Today's applications need to serve users with personalized experiences, which means processing real-time data at scale. But real-time data-rich applications are inherently complicated -- they need to be always-on, scalable, and efficient, while storing and processing huge volumes of real-time data.

As a result, successful businesses are changing how they build applications. This shift primarily entails moving from monolithic architectures to distributed systems composed of microservices deployed in containers, and platform services such as message queues, distributed databases, and analytics engines.

Architectural Shift From Monolithic Architectures to Distributed Systems

The key reasons enterprises are moving to a distributed computing approach include:

- The large volume of data created today cannot be processed on any single computer. The data pipeline needs to scale as data volumes increase in size and complexity.

- Having a potential single point of failure is unacceptable in an era when decisions are made in real time, loss of access to business data is a disaster, and end users don't tolerate downtime.

To successfully build and operate fast data applications on distributed architectures, there are 6 critical requirements for the underlying infrastructure.

1. High availability with no single point of failure

Always-on streaming data pipelines require a new architecture to retain high availability while simultaneously scaling to meet the demands of users. This is in contrast to batch jobs that are run offline—if a three-hour batch job is unsuccessful, you can rerun it. Streaming applications need to run consistently with no downtime, with guarantees that every piece of data is processed and analyzed and that no information gets lost.

Today, applications no longer fit on a single server, but instead run across a number of servers in a datacenter. To ensure each application (e.g. Apache Cassandra™) has the resources it needs, a common approach is to create separate clusters for each application. But what happens when a machine dies in one of these static partitions? Either there is extra capacity available (in which case the machines have been over-provisioned, wasting money), or another machine will need to be provisioned quickly (wasting effort).

The answer lies in datacenter-wide resource scheduling. Machines are the wrong level of abstraction for building and running distributed applications. Aggregating machines and deploying distributed applications datacenter-wide allows the system to be resilient against failure of any one component, including servers, hard drives, and network partitions. If one node crashes, the workloads on that node can be immediately rescheduled to another node, without downtime.

2. Elastic scaling

Fast data workloads can vary considerably over a month, week, day, or even hour. In addition, the volume of data continues to multiply. Based on these two factors, fast data infrastructure must be able to dynamically and automatically scale horizontally (i.e. changing the number of service instances), and vertically (i.e. allocating more or less resources to services), up or down. And so data doesn't get lost, scaling must occur with no downtime.

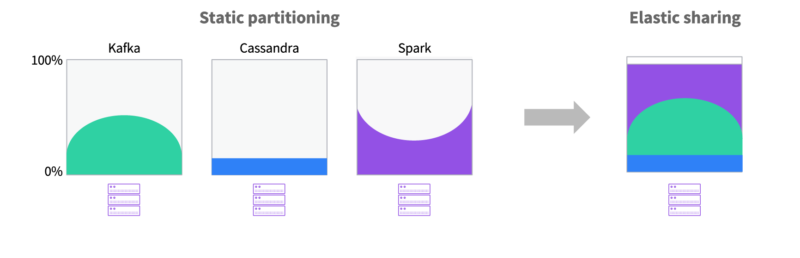

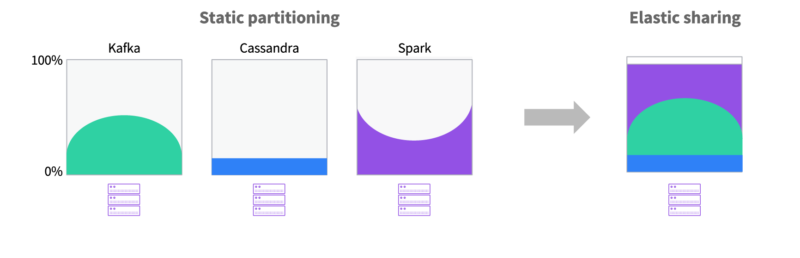

Elastic Resource Sharing Example

A shared pool of resources across data services facilitates elastic scalability, as workloads can burst into available capacity occupied in the cluster.

3. Storage management

Fast data applications must be able to read and write data from storage in real time. There are many kinds of storage, such as local file systems, volumes, object stores, block devices, and shared, network-attached, or distributed filesystems, to name a few. Each of these storage systems have different characteristics and each data service may require or support a different storage type.

In some cases, the data service by nature is distributed. Most NoSQL databases subscribe to this model and are optimized for a scale out architecture. In these cases, each instance has its own dedicated storage and the application itself has semantics for synchronization of data. For this use case, local, dedicated, persistent storage optimized for performance and resource isolation is key. Local persistent storage is "local" to the node within the cluster and is usually the storage resident within the machine. These disks can be partitioned for specific services and will typically provide the best in terms of performance and data isolation. The downside to local persistent storage is that it binds the service or container to a specific node.

In other cases, services that can take advantage of a shared backend storage system are better suited for external storage which may be network attached and optimized for sharing between instances. External storage in that case may be implemented in some form of storage fabric, distributed or shared filesystem, object store, or other "storage service."

4. Infrastructure and application-level monitoring & metrics

Collecting metrics is a requirement for any application platform, but it is even more vital for data pipelines and distributed systems because of the interdependent nature of each pipeline component and the many processes distributed across a cluster. Metrics allow operators to analyze issues across the data pipeline, including latency, throughput, and data loss. In addition, metrics allow organizations to gain visibility on infrastructure and application resource utilization, so that they can right size the application and the underlying infrastructure, ensuring optimum resource utilization.

Traditional monitoring tools do not address the specific capabilities and requirements of web scale, fast data environments. With no service-level metrics, operators cannot troubleshoot or monitor performance and/or capacity. Traditional monitoring tools can be adapted, but they require additional operational overhead for distributed applications. If monitoring tools are custom implemented, they require significant upfront and ongoing engineering effort.

To build a robust data pipeline, collect as many metrics as feasible, with sufficient granularity. And to analyze metrics, aggregate data in a central location. But beyond per-process logging and metrics monitoring, building microservices at scale also requires distributed tracing to reconstruct the elaborate journeys that transactions take as they propagate across a distributed system. Distributed tracing has historically been a significant challenge because of a lack of tracing instrumentation, but new tools such as OpenTracing make it much easier.

5. Security & access control

Without platform-level security, businesses are exposed to tremendous risks of downtime and malicious attacks. Independent teams can accidentally alter or destroy services owned by other teams or impact production services.

Traditionally, teams build and maintain separate clusters for their applications including dev, staging, and production. As monolithic applications are rebuilt as microservices and data services, the size and complexity of these clusters continue to grow, siloed by teams and the technology being used. Without multitenancy, running modern applications becomes exponentially complex, because different teams may be using different versions of data services, each configured with hardware expecting peak demand. The result is extremely high operations, infrastructure and cloud costs driven by administration overhead, low utilization, and multiple snowflake implementations (with unique clusters useable for only one purpose).

To create a multi-tenant environment while providing appropriate platform and application-level security, it is necessary to:

- Integrate with enterprise security infrastructure (directory services and single sign-on).

- Define fine-grained authentication and authorization policies to isolate user access to specific services based on a user's role, group membership, or responsibilities.

Ability to build and run applications on any infrastructure

Fast data pipelines should be architected to flexibly run on any on-premise or cloud infrastructure. For performance and scalability of fast data workloads, you need to have the choice to deploy infrastructure that meets the specific needs of your application. For example, the most sensitive data can be kept on-premises for privacy and compliance reasons, while the public cloud can be leveraged for dev and test environments. The cloud can also be used for burst capacity. Wikibon estimates that worldwide big data revenue in the public cloud was $1.1B in 2015 and will grow to $21.8B by 2026—or from 5% of all big data revenue in 2015 to 24% of all big data spending by 2026.

For such hybrid scenarios companies often find themselves stuck with two separate data pipeline solutions and no unified view of the data flows. While the choice of infrastructure is vital, a key requirement is a similar operating environment and/or single pane of glass, so that workloads can easily be developed in one cloud and deployed to production in another.

Mesosphere DC/OS: Simplifying the Development and Operations of Fast Data Applications

DC/OS solves the six critical requirements for fast data infrastructure:

- High availability: The core of DC/OS, Apache Mesos™, enables distributed systems to be pooled and share datacenter resources. Workloads are automatically restarted when a server fails.

- Elastic scalability: Pooling resources across a datacenter or cloud also enables elastic scaling, where workloads can scale up or down based on demand.

- Storage management: Support for persistent volumes that are resilient to restart and external volumes that migrate with the application.

- Monitoring & metrics: DC/OS provides out-of-the-box application-level monitoring and troubleshooting, so operators can easily troubleshoot or monitor performance and capacity.

- Security & access control: Built-in security features such as fine grained access control lists, secrets management, and integration with directory services (LDAP) and single sign-on solutions (SAML, OpenID connect) enable companies to isolate access to specific services based on a user's role, group membership, or responsibilities.

- Run on any infrastructure: Use DC/OS on bare-metal, virtual (vSphere or OpenStack) and cloud (AWS, Azure, GCE), for complete workload portability.

Sources:

• Big Data in the Public Cloud Forecast, Wikibon, 2016

Want to learn more?

This content is an excerpt from the O'Reilly eBook, "Architecting for Fast Data Applications." This book details the infrastructure requirements to build fast data applications, the challenges that arise, and the key technologies and tools required for success.