
Companies have used Apache Mesos and the Mesosphere Datacenter Operating System (DCOS) to do all sorts of things, from continuous code deployment at Yelp to running Siri at Apple. Now they can add GPU-accelerated applications to that list, including deep learning, image and video processing, speech and natural language processing, and graph analytics. Thanks to an engineering partnership between Mesosphere and NVIDIA, Mesos now supports GPUs.

By supporting GPUs, we're giving users the opportunity to take advantage of the types of data-processing and computing techniques that will underpin tomorrow's most-popular applications. These include processing the deluge of video, audio and other data streaming in from mobile phones, drones and cars, as well as advanced types of machine learning to help us analyze all that rich data and build intelligent products around it.

Deep learning is a particularly popular form of machine learning that many of us actually consume every day, including when we use things like:

- Voice search on our mobile phones or other devices
- Skype Translate
- Digital assistants such as Apple Siri and Microsoft Cortana
- Autocorrect on text message apps
- Search features on photo apps such as Google Photos and Flickr
- Recommendation engines on services such as Netflix and Spotify

And we're only on the cusp of the new artificial intelligence revolution. Google CEO Sundar Pichai was dead serious when he said during the company's recent earnings call, "Machine learning is a core, transformative way by which we're rethinking everything we're doing." Google, Facebook, Microsoft, Apple, Baidu, Twitter, IBM and other large tech companies have already invested billions in deep learning and will continue to do so.

As consumers get used to intelligent experiences while surfing the web or using their mobile devices, they're going to start expecting intelligent experiences everywhere. These new approaches to machine learning are already powering major advances in fields ranging from self-driving cars to household robots.

Powering it all with GPUs

The promise of meaningful AI applications has brought GPUs out of supercomputing centers—where they historically handled workloads such as graphics rendering or resource-intensive simulations—and into enterprise datacenters. The parallel nature of GPUs makes them ideal for these new video-processing and machine learning workloads, because there's lots of complex data to process and complex algorithms to compute, and CPUs just cannot keep up.

At this point, in fact, GPUs are the go-to hardware choice for many deep learning systems, including at Google, Facebook and Baidu. Most popular open source deep learning software libraries are optimized for GPUs, and NVIDIA has worked to optimize its CUDA programming model for deep learning. Even Apache Spark, which is a core piece of Mesosphere's big data vision, lets users take advantage of GPU acceleration for machine learning jobs.

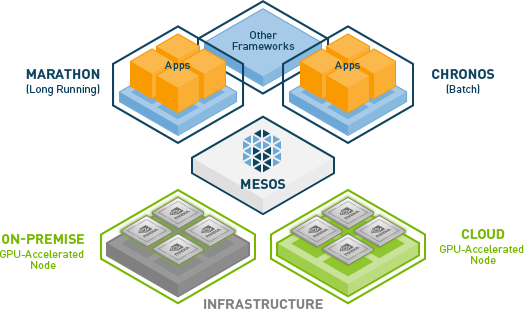

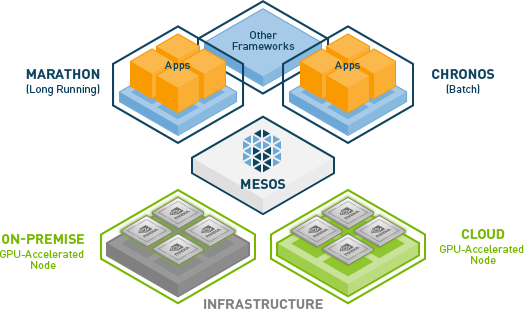

Credit: NVIDIA

The big benefit of running GPU workloads on the DCOS is that it is an operating system for datacenter-scale applications. Broadly speaking, this means users can create massive shared clusters capable of running everything from code tests to high-performance computing workloads. Users install (or build) the services they want to run and tell the DCOS how much of each resource those services need. The DCOS then makes sure services are deployed on the right type of machine with the right amount of resources.

Thanks to our work with NVIDIA, Mesos will treat GPU resources the same way it treats CPU and system memory resources. They will be aggregated across the cluster and treated, essentially, as one big GPU. The result is that data scientists and machine learning experts can focus on their models and applications rather than on operating distributed systems or worrying about resource specifications.
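To make the idea concrete, here is a minimal Python sketch of pooling resources across machines and then placing a request on a machine that can satisfy it. It is an illustration only, not Mesos or DCOS code: the `Agent` class, the `cluster_totals` and `place` functions, and the first-fit strategy are all hypothetical names and choices made for this example, and the sketch assumes each request must fit on a single machine.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Agent:
    """One machine and the resources it has free (hypothetical model, not a Mesos type)."""
    name: str
    cpus: float
    mem_mb: int
    gpus: int


def cluster_totals(agents: List[Agent]) -> Dict[str, float]:
    """Sum each resource type across all agents to get the pooled cluster view."""
    return {
        "cpus": sum(a.cpus for a in agents),
        "mem_mb": sum(a.mem_mb for a in agents),
        "gpus": sum(a.gpus for a in agents),
    }


def place(agents: List[Agent], cpus: float, mem_mb: int, gpus: int) -> Optional[str]:
    """First-fit placement (an assumed strategy): pick the first agent with
    enough free CPU, memory and GPUs, and deduct the request from it."""
    for agent in agents:
        if agent.cpus >= cpus and agent.mem_mb >= mem_mb and agent.gpus >= gpus:
            agent.cpus -= cpus
            agent.mem_mb -= mem_mb
            agent.gpus -= gpus
            return agent.name
    return None  # no single agent can satisfy the request


# Example cluster: one CPU-only machine and one machine with four GPUs.
agents = [
    Agent("agent-1", cpus=16, mem_mb=65536, gpus=0),
    Agent("agent-2", cpus=8, mem_mb=32768, gpus=4),
]

totals = cluster_totals(agents)                       # pooled view before placement
chosen = place(agents, cpus=4, mem_mb=8192, gpus=2)   # a GPU job lands on the GPU machine
```

In this sketch the person submitting the job states only how many CPUs, how much memory and how many GPUs it needs; choosing the machine is left to the placement function, which is the division of labor described above.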

It's yet another step along the path toward enterprise IT simplicity and efficiency that Mesosphere has been pushing for more than two years. Mesosphere's DCOS already makes it simpler than ever to deploy the big data systems that power modern stream-processing and analytics workloads for efforts such as business intelligence and the Internet of Things. Our support for container technologies such as Docker and Kubernetes means DCOS users can take advantage of modern application architectures and code-deployment practices.

And now, our partnership with NVIDIA to bring GPU support to Mesos and the Datacenter Operating System means users don't have to be left out of the revolutions happening around user-generated content, ubiquitous video and machine learning. They can focus on finding the right applications and building the right models, and we'll take care of operating the infrastructure that powers it all.