Since the beginning, our goal for DC/OS was to provide application developers and operators with an easy way to consistently deploy and run applications and data services on any public and private infrastructure. The unified developer and operator experience allowed our customers to easily migrate applications across clouds, and made it easy to use one cloud or datacenter for testing, a second for production, and a third for disaster recovery.

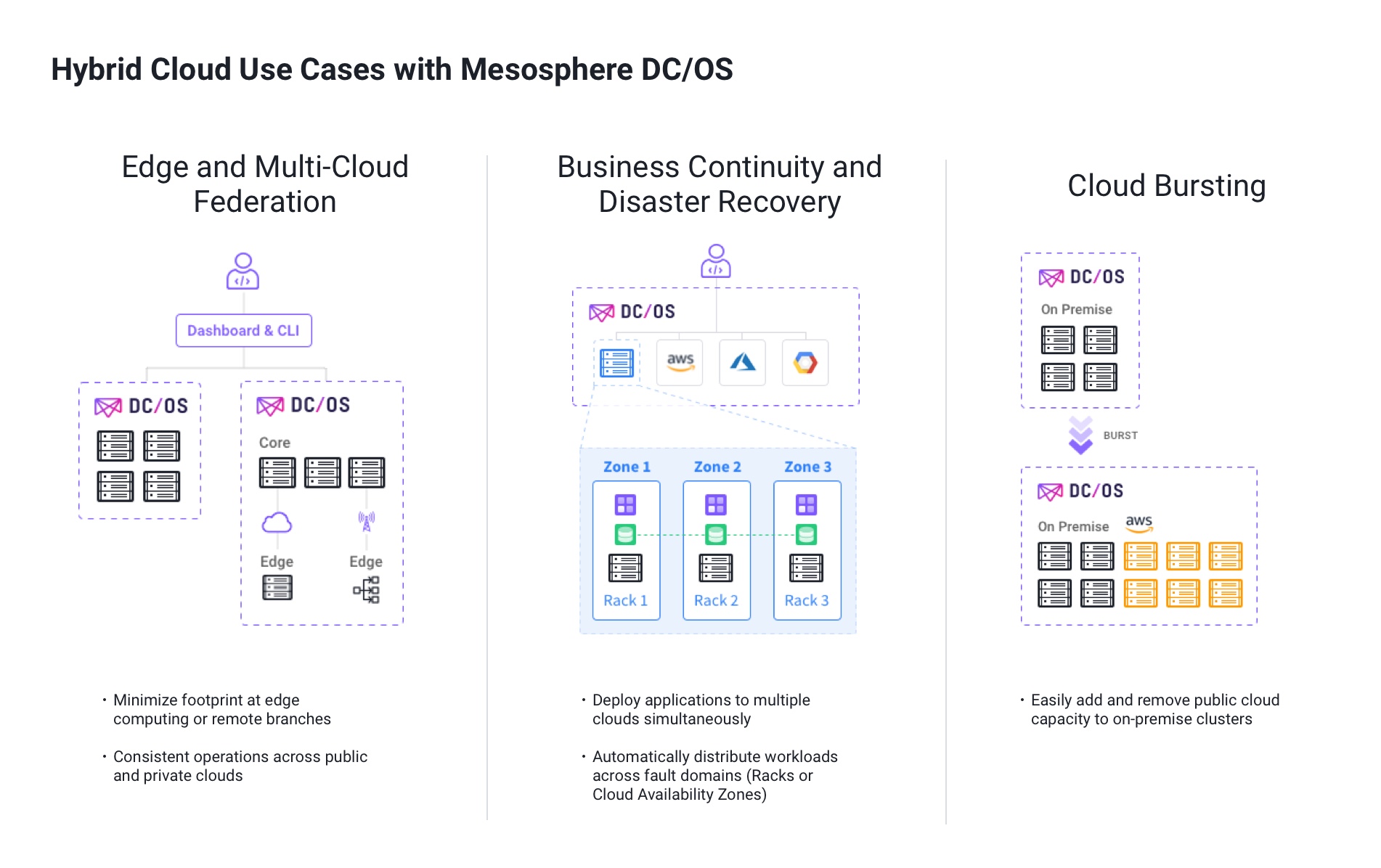

DC/OS 1.11 enhances and extends previous hybrid cloud functionality to unlock powerful use cases such as:

- Edge and Multi-Cloud Federation: A single DC/OS cluster can now stretch beyond local nodes, which means remote offices or edge computing infrastructures can be centrally managed while maintaining a minimal footprint. In addition, multiple DC/OS clusters can be linked to simplify management and operation.

- Business Continuity/Disaster Recovery: In addition to being resistant to machine failure, DC/OS 1.11 allows you to build applications that are resistant to outages within racks, availability zones or even cloud regions. You can even deploy multiple instances of your application across cloud providers.

- Cloud Bursting: Easily expand your on-premise infrastructure with additional capacity from public cloud providers, remove capacity when not needed, and manage it all from a single unified interface.

To accomplish the above, DC/OS 1.11 introduces many features such as:

- Regions and Zones support

- Multi-cluster Linking

- Other enhancements to simplify operations

Let's dive into these capabilities and see how they work together to achieve a hybrid cloud experience.

DC/OS 1.11: Regions & Zones

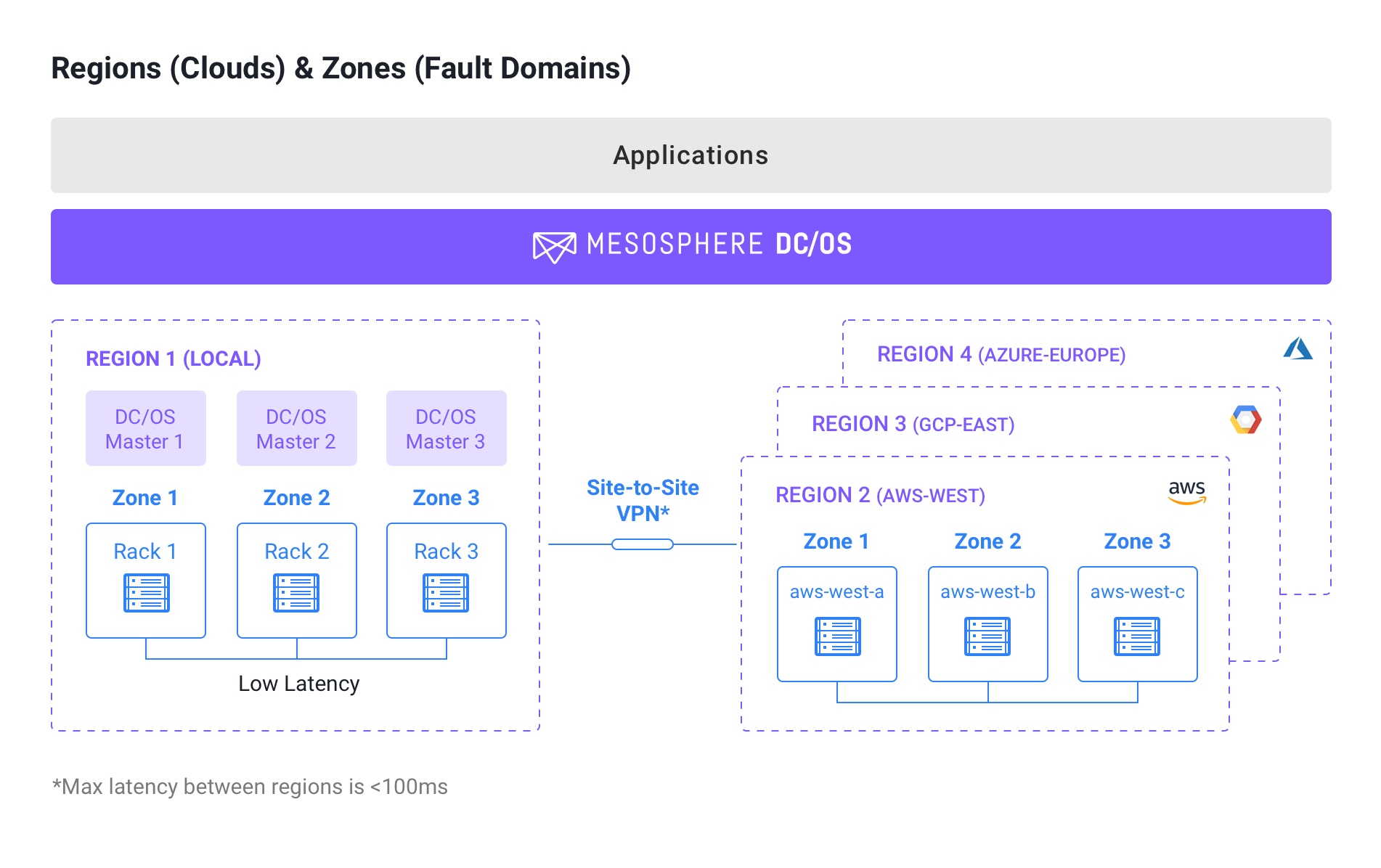

DC/OS introduces a two-level hierarchical grouping for your pool of physical, virtual, and cloud servers: Regions and Zones. A Region represents all server instances in a specific datacenter or cloud region (e.g., AWS-West). A Zone represents a fault domain within a region, for example, a rack in a datacenter or a cloud availability zone (e.g., AWS-West-A).
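To make the two-level grouping concrete, here is a minimal Python sketch of a fault domain label. The `FaultDomain` class is hypothetical and only for illustration. The nested `fault_domain` layout mirrors the shape Mesos uses for domain information as we understand it, so treat it as an assumption and check the documentation for your version.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class FaultDomain:
    """Two-level placement label: a Region that contains a Zone."""

    region: str  # a datacenter or cloud region
    zone: str    # a rack or cloud availability zone inside that region

    def to_domain_info(self) -> dict:
        # Nested layout assumed for illustration (region name, then zone name).
        return {
            "fault_domain": {
                "region": {"name": self.region},
                "zone": {"name": self.zone},
            }
        }


# Names taken from the examples in the text above.
domain = FaultDomain(region="AWS-West", zone="AWS-West-A")
print(json.dumps(domain.to_domain_info(), indent=2))
```

Every node carries exactly one such label, which is what lets a scheduler reason about Regions and Zones rather than individual machines.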

Regions make it easy to manage different cloud environments, while Zones make it easy to automatically deploy and manage applications across fault domains. Let's look at them in more detail.

Regions

Regions make it easy to manage one large DC/OS cluster across multiple clouds. DC/OS supports multiple regions in one cluster, and introduces the concept of one local region and multiple remote regions. The local region is the region running the master nodes and agent nodes, while a remote region contains only agent nodes. All Mesos master nodes must be in the local region due to network latency requirements. They should, however, be spread across zones within that region for fault tolerance (see later for more details).

Customers can use a single DC/OS GUI or CLI instance to deploy applications based on available resources or business requirements to a specific region. Applications that don't specify a region will be deployed to the local region by default.

Latency between regions is expected to be no greater than 100 ms. This is usually adequate for a cluster spread between the US East and West coasts, or between the US East coast and Europe. By default, DC/OS considers connectivity to a remote node to be lost after 10 minutes of inactivity. Users can configure remote agents with a higher timeout so that applications in the remote regions remain alive until connectivity is restored.

Zones

Zones make it easy to deploy applications across fault domains in your datacenter or cloud to increase application uptime. A fault domain is a section of the datacenter or a cloud that is vulnerable to damage if a critical device or system fails. All server instances within a fault domain share similar failure and latency characteristics. All application instances in the same fault domain are affected by failure events within the domain. Placing server instances in more than one fault domain reduces the risk that a failure will affect them all.

Prior to Zones, DC/OS provided high availability and automatic failover against hardware or virtual machine failure. Distributed data services like Kafka, Cassandra, Elastic, or HDFS were automatically deployed across multiple server instances so that the loss of a single machine would not cause a major outage. Zones take this concept to the next level and allow applications to be distributed across fault domains, further increasing uptime.
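The benefit of spreading instances across fault domains can be shown with a small worked example. The sketch below is a toy round-robin placement, not the actual DC/OS or Marathon scheduler, and the zone names are hypothetical.

```python
from collections import Counter
from itertools import cycle


def spread_across_zones(instance_count, zones):
    """Assign instances to zones round-robin; returns one zone name per instance."""
    zone_cycle = cycle(zones)
    return [next(zone_cycle) for _ in range(instance_count)]


def survivors_after_zone_failure(placement, failed_zone):
    """Count the instances that are not located in the failed zone."""
    return sum(1 for zone in placement if zone != failed_zone)


zones = ["zone-a", "zone-b", "zone-c"]  # hypothetical zone names
spread = spread_across_zones(6, zones)
packed = spread_across_zones(6, ["zone-a"])  # everything in one fault domain

print(Counter(spread))
print(survivors_after_zone_failure(spread, "zone-a"))
print(survivors_after_zone_failure(packed, "zone-a"))
```

With six instances spread over three zones, losing one zone leaves four instances running. With all six in a single zone, the same failure leaves none.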

For an on-premises datacenter, Zones can be manually defined according to business requirements or datacenter layout. A common approach is to use a server rack or a group of racks as a Zone boundary. Public cloud providers identify fault domains as availability zones within each cloud region. DC/OS automatically detects the region and the availability zone for the three major public cloud providers (AWS, Azure, GCP) during installation, without the need for any configuration from the user.

DC/OS 1.11: Cluster Linker

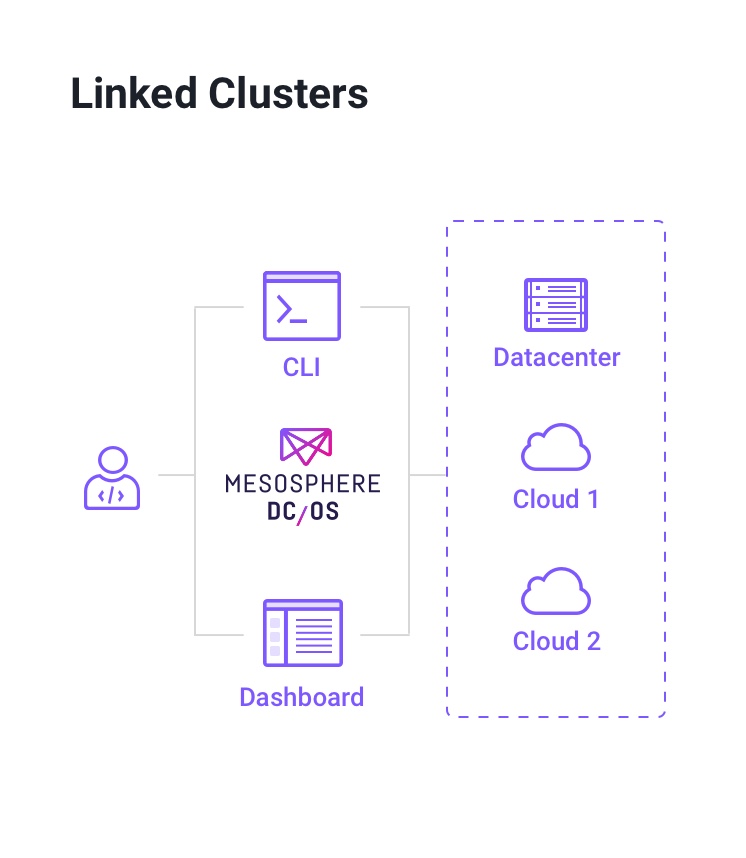

DC/OS also introduces cluster linking to simplify administration of multiple DC/OS clusters. Organizations sometimes run separate DC/OS clusters across multiple datacenters and clouds for different functions, such as dev/test, edge cloud, or remote branches. Clusters can be linked together, and users in the same organization can manage multiple clusters through a single interface. Customers using a single sign-on solution such as SAML or OpenID Connect only have to log in once to manage any linked cluster.

DC/OS 1.11: Other enhancements for simplified cloud operations

To improve the operator experience, we've also provided many more capabilities such as Marathon app support, simplified node addition and removal, and automatic detection of Regions and Zones:

Marathon Support

Marathon support for hybrid cloud gives operators fine-grained placement policies across Regions and Zones. Operators can now specify whether they want an application to be deployed to a specific Region (for example, a cloud provider region) or distributed across Zones, optionally a specific set of Zones, for high availability.

If no Region is specified, Marathon deploys the application to the local Region by default, so that applications are not placed in remote Regions unintentionally.
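The placement policies described above might be expressed roughly as follows. This is a sketch that builds a Marathon-style app definition in Python. The helper `app_definition`, the app ID, and the region name are hypothetical, and the `@region` and `@zone` constraint fields reflect our understanding of Marathon's constraint syntax, so verify them against the Marathon documentation for your release.

```python
import json


def app_definition(app_id, instances, region=None, zone_groups=None):
    """Build a minimal Marathon-style app definition as a plain dict."""
    constraints = []
    if region is not None:
        # Pin the app to one Region; leave unset to use the local Region.
        constraints.append(["@region", "IS", region])
    if zone_groups is not None:
        # Spread instances evenly over this many Zones.
        constraints.append(["@zone", "GROUP_BY", str(zone_groups)])
    return {
        "id": app_id,
        "cmd": "sleep 3600",
        "cpus": 0.1,
        "mem": 32,
        "instances": instances,
        "constraints": constraints,
    }


# Hypothetical app pinned to one remote Region and spread over three Zones.
remote_app = app_definition("/web", 6, region="aws-us-west-2", zone_groups=3)
# No Region given: no region constraint is emitted, so the default applies.
local_app = app_definition("/batch", 2)

print(json.dumps(remote_app, indent=2))
print(json.dumps(local_app, indent=2))
```

Leaving the region constraint out entirely is what triggers the default behavior of deploying to the local Region.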

SDK-based Data Services Support

Certified data services in the Mesosphere DC/OS Service Catalog have been updated to support deployment across Zones. Customers can use Marathon-style constraints to specify that a data service should be deployed automatically across Zones. For more fine-grained control, customers can also name the specific Zones in which they would like a service to be deployed. Note that deploying data services across Regions is not recommended, given the expected higher latency between them. Our team will continue working with our partners to extend these new capabilities to additional data services.

Simplified node addition/decommission

DC/OS 1.11 also makes it easy to add nodes to and remove nodes from the cluster. You can add nodes to a specific Region by installing DC/OS on the new resources using any of your favorite automation tools, such as Chef, Puppet, Ansible, Terraform, or even Bash scripts. Node decommissioning is also simplified through the CLI with the `dcos node decommission` command. Note that this command only removes the node from the DC/OS cluster. It does not tear down the physical or cloud machine, which you will need to do yourself to avoid paying for unused resources.
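For operators who script node removal, a thin wrapper around the CLI could look like the sketch below. It assumes the command takes the Mesos agent ID as its only positional argument. The helper names and the agent ID are placeholders, and the wrapper defaults to a dry run so nothing is executed unless requested.

```python
import shlex
import subprocess


def decommission_command(agent_id):
    """Return the argv for decommissioning one agent by its Mesos agent ID."""
    if not agent_id:
        raise ValueError("agent_id must be a non-empty string")
    # Assumption: the agent ID is passed as a single positional argument.
    return ["dcos", "node", "decommission", agent_id]


def decommission(agent_id, dry_run=True):
    """Print the command; execute it only when dry_run is False."""
    argv = decommission_command(agent_id)
    print(shlex.join(argv))
    if not dry_run:
        # Requires an installed and authenticated dcos CLI.
        subprocess.run(argv, check=True)
    return argv


# Placeholder ID for illustration; substitute a real agent ID from your cluster.
decommission("EXAMPLE-AGENT-ID")
```

Tearing down the underlying machine remains a separate step in whatever tool provisioned it.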

Automatic detection of cloud regions and availability zones

At node installation, DC/OS communicates with the 3 major public cloud provider APIs (AWS, Azure, GCP) to identify the region and availability zone of the node. DC/OS then tags the node automatically with the appropriate labels for simplified administration. This feature can be enabled or disabled if desired.
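As an illustration of what such detection involves, the sketch below derives a region and zone for an AWS node from the instance metadata service. It is not the script DC/OS ships. The function names are hypothetical, the request is the simple unauthenticated form (instances that enforce IMDSv2 need a session token first), and the region is derived by dropping the trailing letter of a standard availability zone name, which does not hold for every zone type.

```python
import urllib.request

# AWS instance metadata path that returns the node's availability zone.
AZ_URL = "http://169.254.169.254/latest/meta-data/placement/availability-zone"


def fetch_availability_zone(timeout=2.0):
    """Ask the instance metadata service for this node's availability zone."""
    with urllib.request.urlopen(AZ_URL, timeout=timeout) as response:
        return response.read().decode("utf-8").strip()


def region_and_zone(availability_zone):
    """Derive (region, zone) from a standard AZ name such as 'us-west-2a'."""
    if len(availability_zone) < 2 or not availability_zone[-1].isalpha():
        raise ValueError(f"unexpected availability zone: {availability_zone!r}")
    # Standard AZ names are the region name plus a single trailing letter.
    return availability_zone[:-1], availability_zone


# Offline example; on an EC2 node you would pass fetch_availability_zone().
print(region_and_zone("us-west-2a"))
```

Azure and GCP expose comparable metadata endpoints, each with its own URL and required headers.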

DC/OS 1.11 Makes Hybrid Cloud a Reality